Earlier this year I wrote a two-part series called CI In The Age Of Containers - Part 1 & Part 2. My original goal was to explore the impact containers might have on the build process. I expected a profound impact that would shake up what I knew about building deliverables, which I had done for years before containers came on the scene. What I learned was that containers didn't change the build process much, but they did have a big impact on overall testing, which can now include compliance and security checks at build time. The combination of converged supply chains, unprecedented visibility, and automated tooling makes for some very exciting times.

In Part 1 we started with the build of the deliverable, in this case the Webgoat project (my fork), which as of version 8 is a Spring Boot app in a container. The build is a two-step process: build the JAR file, then build the container image and put the JAR in it. Containers are easy to run, so 'deploying' the app for testing is a docker run command away. That pulls a lot of traditional testing into the build phase of a CI pipeline, beyond just the unit testing of yesteryear. More testing means more opportunities to throw away builds that don't pass all of the tests. Previously, publishing every build to my Nexus Repo with a unique version, just so I could deploy it to a test environment, meant we had a lot of binaries to clean up later. It's a good example of how getting feedback earlier can drive waste out of a process.
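To make that flow concrete, here is a minimal sketch of the two-step build followed by a crude smoke test. It is not the pipeline from Part 1. The image tag, container name, port, Maven goal, and login URL are all assumptions for illustration. By default the script only prints the commands it would run; set DRY_RUN=0 to execute them.

```shell
#!/bin/sh
# Sketch only: build the JAR, build the image, run it, smoke test it.
set -eu

IMAGE="${IMAGE:-webgoat-ci:local}"   # hypothetical local tag, never published
NAME="${NAME:-webgoat-ci}"           # hypothetical container name
PORT="${PORT:-8080}"                 # assumed application port
DRY_RUN="${DRY_RUN:-1}"              # 1 = print commands only, 0 = execute them

# Print a command, and execute it only when DRY_RUN=0.
run() {
  echo "+ $*"
  if [ "$DRY_RUN" = "0" ]; then
    "$@"
  fi
}

pipeline() {
  run mvn -B package                  # step 1: build the JAR (assumed goal)
  run docker build -t "$IMAGE" .      # step 2: build the image around the JAR
  run docker run -d --rm --name "$NAME" -p "$PORT:$PORT" "$IMAGE"

  # Smoke test: the URL is an assumption, adjust it to the app under test.
  if ! run curl --fail --silent --output /dev/null \
       --retry 10 --retry-delay 3 --retry-connrefused \
       "http://localhost:$PORT/WebGoat/login"; then
    run docker stop "$NAME"
    return 1                          # failed build: nothing gets published
  fi

  run docker stop "$NAME"
}

pipeline
```

A non-zero exit is what lets the CI server discard the build before anything reaches a repository, which is the waste reduction described above.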

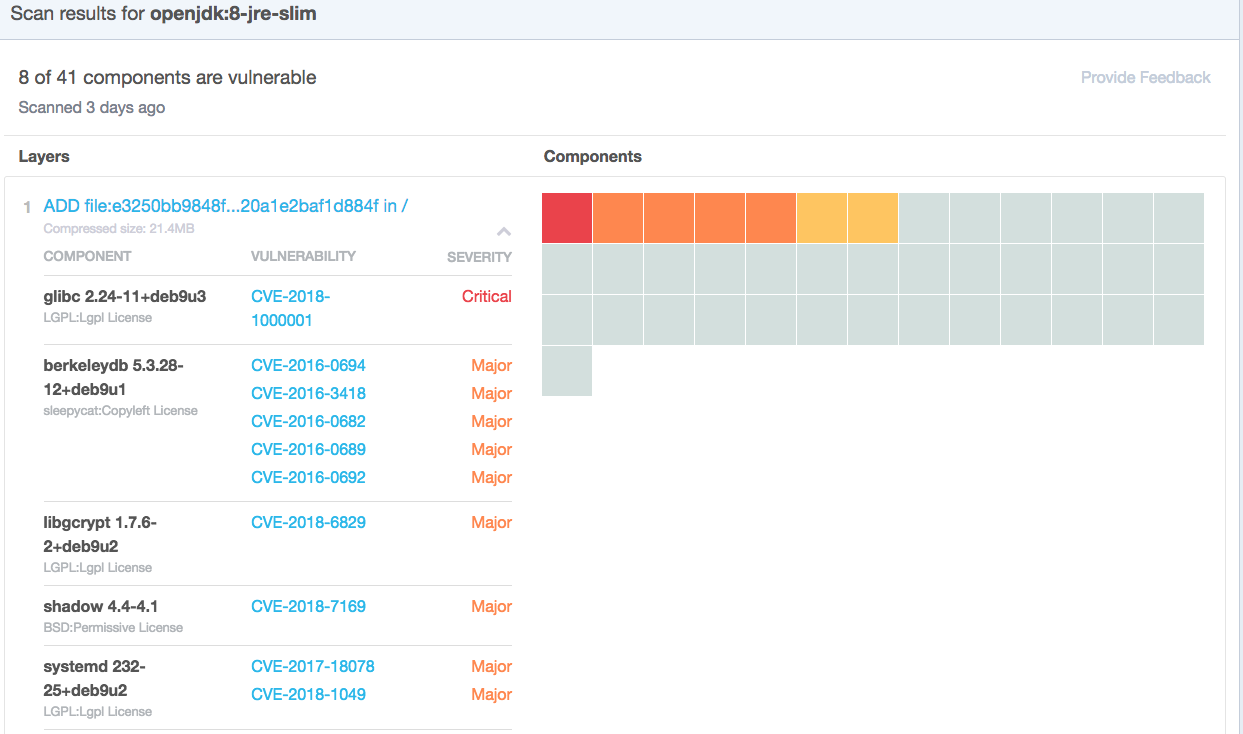

In Part 2 we explored how containers create a converged supply chain, with all changes flowing through the CI pipeline. Images are created with a reliable and repeatable process in the first place, and they can also be audited with modern tools like Chef InSpec and our own Nexus Lifecycle. What's really exciting, though, is to see the suppliers getting this same kind of visibility back in Docker Hub and GitHub. Our Webgoat project is built on top of the OpenJDK:8-jre-slim image, so let's look at the tags page for that image back on Docker Hub.

It is simply amazing to me that our suppliers can now be this transparent, giving us consumers the ability to make an informed choice. While the Webgoat project is intentionally insecure and might even leverage these issues, for everyday work we'd want to be able to do something about them. In this case the upstream project could simply rebuild, which would likely patch everything we see here in the base layer. If our supplier isn't doing this, our tests are going to fail and we could, if needed, take matters into our own hands and run the update ourselves. On an Alpine-based image, that would look something like this added to our Dockerfile:

RUN apk update && apk upgrade && rm -rf /var/cache/apk/*

This runs an update against the system and then flushes the cache to avoid any unnecessary container bloat. Now, every time we build we'd get the latest packages available, hopefully addressing all of the issues above. Similarly, GitHub recently added security scanning to its source code repos and announced that it had found over 4 million issues! This type of source scanning is less reliable (perhaps a future blog post will explore that), but it does push visibility even further upstream.
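One caveat on the package update: Docker's layer cache can reuse the result of an earlier update step, so "every time we build" only holds if the cache is bypassed or the build agent starts clean. A minimal sketch of a build command that forces a fresh base image and a fresh update layer is below. The image tag is hypothetical, and as written the script only prints the command unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Sketch only: force a fresh base image and rerun every layer.
set -eu

IMAGE="${IMAGE:-webgoat-ci:local}"   # hypothetical tag
DRY_RUN="${DRY_RUN:-1}"              # 1 = print the command only, 0 = execute it

fresh_build() {
  # --pull     : always try to fetch a newer copy of the base image
  # --no-cache : rebuild every layer, so the package update actually reruns
  set -- docker build --pull --no-cache -t "$IMAGE" .
  echo "+ $*"
  if [ "$DRY_RUN" = "0" ]; then
    "$@"
  fi
}

fresh_build
```

The trade-off is build time: skipping the cache on every build is slower, so some teams may prefer to do it only on a nightly or scheduled build.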

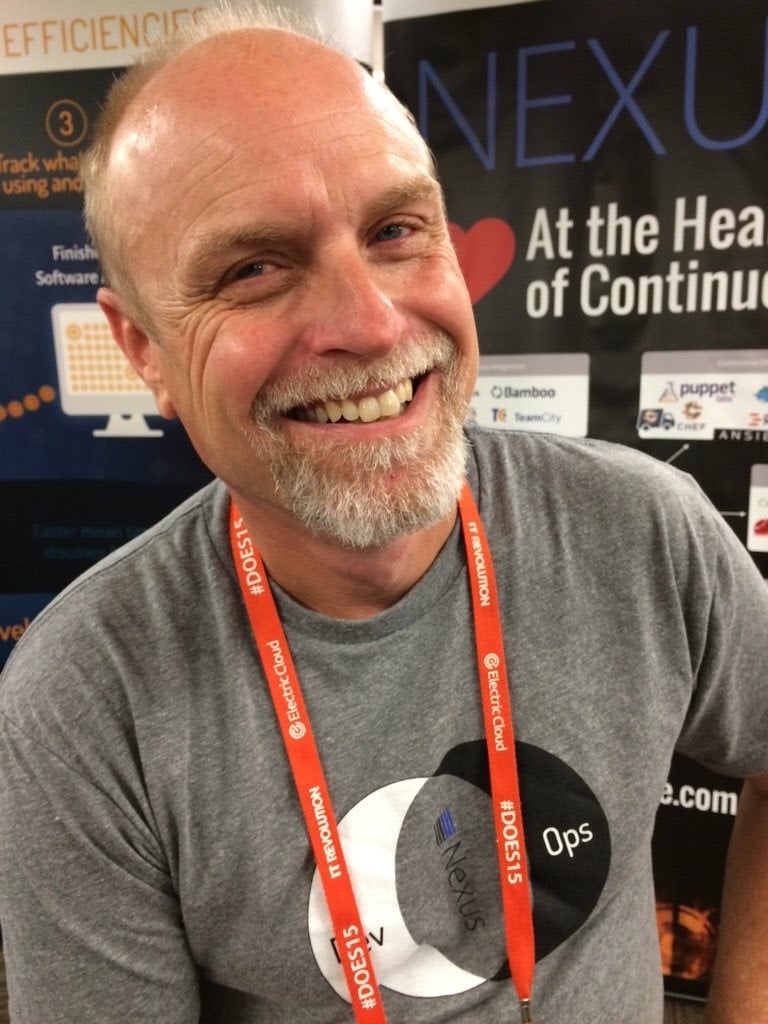

So, as I look at the landscape today and compare it to three years ago, when I made the move from a corporate delivery team to Sonatype, I get really excited about how much things have changed in such a short amount of time. Converged supply chains, combined with modern, automatable tools to inspect our deliverables, give me confidence in delivery teams' ability to execute at speed with quality. Increased awareness and visibility for upstream suppliers provides coverage across the whole supply chain. The age of containers is here, and it's a really exciting time to be in IT.