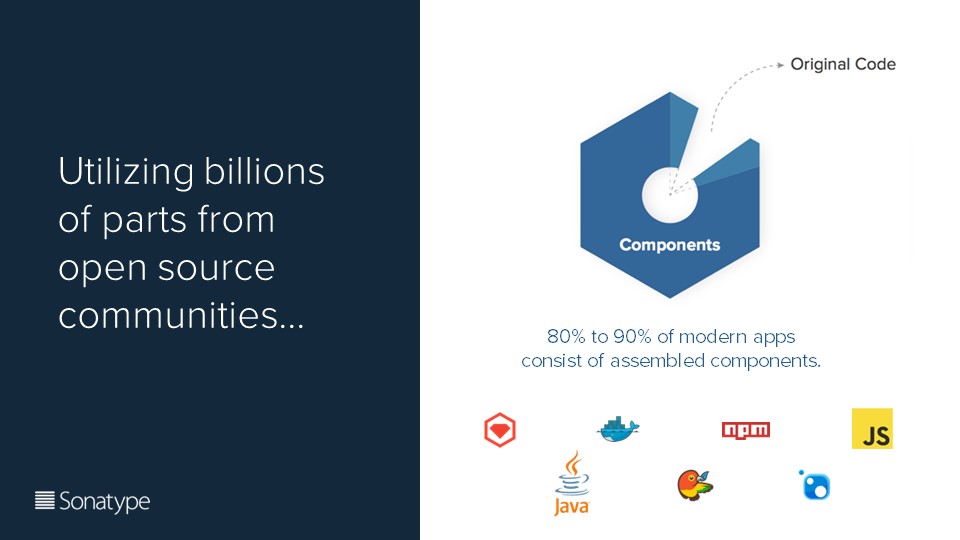

The universe of open source is exploding.

This should no longer be a surprise to most developers, and hopefully not to most companies. Neither should the fact that open source components make up 80 to 90% of most modern applications.

While I believe we’ve all become comfortable with the notion of open source, there are still many questions, and concerns, around how open source should be managed - and for good reason. Just to name a few of them:

- These components are not created equal. Some are older, vulnerable, or incompatible with each other.
- Usage has become more complex. With tens of billions of downloads, it’s increasingly difficult to manage.
- Transitive dependencies that you may not be aware of are pulled in as well.

That’s why, at this year’s Nexus User Conference, Chris Carlucci, lead customer success engineer at Sonatype, dissected what organizations are doing to better manage, track and monitor open source consumption.

First, he asks us to consider how Toyota makes cars. They use supply chain principles to source high quality and trusted parts. This allows them to determine risk quickly, and in turn, that allows teams to deliver faster and with less technical debt. Application teams can take advantage of these principles as well.

Furthermore, when considering application teams, Carlucci wants listeners to realize that many don’t pull dependencies from centralized storage. They pull in components from the internet each time. If teams switch to using a repository manager, the dependencies are brought down and cached locally. This allows these dependencies to be scanned, pulled into the pipeline, and reused by all teams.
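To make the caching idea concrete, here is a minimal sketch in Python. It is not how any particular repository manager is implemented; the class name `CachingRepository`, the `fetch_upstream` callable, and the coordinate strings are all hypothetical and exist only for illustration.

```python
from typing import Callable, Dict, List


class CachingRepository:
    """Toy stand-in for a repository manager that proxies an upstream source."""

    def __init__(self, fetch_upstream: Callable[[str], bytes]) -> None:
        self._fetch_upstream = fetch_upstream  # hypothetical download function
        self._cache: Dict[str, bytes] = {}
        self.upstream_requests = 0

    def get(self, coordinate: str) -> bytes:
        # Go to the internet only the first time a component is requested.
        if coordinate not in self._cache:
            self.upstream_requests += 1
            self._cache[coordinate] = self._fetch_upstream(coordinate)
        return self._cache[coordinate]

    def inventory(self) -> List[str]:
        # Everything cached locally is known, so it can be listed and scanned.
        return sorted(self._cache)


# Two teams asking for the same component trigger a single upstream download.
repo = CachingRepository(lambda coordinate: coordinate.encode("utf-8"))
repo.get("org.example:example-lib:1.2.0")
repo.get("org.example:example-lib:1.2.0")
print(repo.upstream_requests, repo.inventory())
```

The point of the sketch is the side effect of caching: once every download goes through one place, that place also holds an inventory of what the organization actually consumes.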

Next, when thinking about appropriate integration requirements, you need to be fast and precise, and you need to keep context in mind. Integration requirements should also be actionable and continuous. Everything going to production needs to be approved, and monitoring then validates that things are working as expected.

So where can we add in integration points? First, consider policies on components. The next integration point involves the IDE—it should drive integration before code is pushed to source control. Following that is the build process—you should know what’s going out in each and every deploy. And finally, you need to continuously monitor the app.

Continuing on to roles and responsibilities, Carlucci wants us to consider who’s involved in integration: policy, integration, and lifecycle participants. It takes every one of these to define and follow the guidelines that build better components. And this starts with putting the right people in the right spots.

We also have to consider the adoption model. This involves finding the number of applications, determining how they’re integrated, looking at organization structure, and setting roles and responsibilities. There are two approaches to this. (1) The centralized approach relies on administrators reaching out to individual teams, which becomes cumbersome. (2) The preferred alternative is the federated approach, where sponsors socialize adoption units and teams own the integration process.

So what is a policy? Carlucci dives into its purpose, using no-smoking signs and speed limits as examples of policies that we’re familiar with from our daily lives.

In our context—namely, building better component guides—policies are sets of guidelines that define traits of vulnerabilities and relative risks. Additionally, they define what action is required.

For example, policies can help identify security vulnerabilities and license risks. The license risks can keep you free of obligations and requirements that could harm your company or product.

Then there are architecture policies, covering component age, popularity, cleanup, and external tool metadata, that need to be considered. Of great importance is the match state of a component: is the component an exact match to what’s expected, or is it just similar? A merely similar match could indicate a hacked or bad component.
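As a rough illustration, a policy in this sense can be modeled as a condition on a component plus the action to take when the condition matches. The sketch below is an assumption-laden example in Python, not the format used by any specific tool: the `Component` fields, the policy names, the CVSS and age thresholds, and the license list are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List


class Action(Enum):
    WARN = 1
    FAIL = 2


@dataclass(frozen=True)
class Component:
    name: str
    version: str
    age_years: float   # time since this version was released
    max_cvss: float    # highest known vulnerability score, 0.0 if none
    license_id: str
    match_state: str   # "exact", "similar", or "unknown"


@dataclass(frozen=True)
class Policy:
    name: str
    condition: Callable[[Component], bool]  # the trait that signals risk
    action: Action                          # what to do when it matches


# Hypothetical thresholds and license list, chosen only for illustration.
POLICIES: List[Policy] = [
    Policy("security-critical", lambda c: c.max_cvss >= 9.0, Action.FAIL),
    Policy("license-copyleft", lambda c: c.license_id in {"GPL-3.0", "AGPL-3.0"}, Action.FAIL),
    Policy("architecture-age", lambda c: c.age_years > 5, Action.WARN),
    Policy("match-not-exact", lambda c: c.match_state != "exact", Action.WARN),
]


def evaluate(component: Component, policies: List[Policy] = POLICIES) -> List[Policy]:
    """Return the violated policies, most severe action first."""
    violated = [p for p in policies if p.condition(component)]
    return sorted(violated, key=lambda p: p.action.value, reverse=True)


old_lib = Component("example-lib", "1.2.0", age_years=7.0, max_cvss=9.8,
                    license_id="Apache-2.0", match_state="similar")
for policy in evaluate(old_lib):
    print(policy.action.name, policy.name)
```

Keeping the condition and the action together in one record mirrors the description above: a policy says both what counts as risk and what has to happen when that risk shows up.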

Now that we know what to look for (and how to find it), what do we do when we uncover something?

We mitigate.

First rule of thumb: communication, communication, communication. This is vital. If everything feels critical, then nothing’s critical. Define what’s truly important so you know where to focus and when to raise the red flag versus the orange one. Later on, you can look at technical debt or less critical issues that have been identified.

Having the policies, however, is only half the battle. Without enforcement, the best policies don’t mean anything. Carlucci shared a few things to keep in mind to make the policies you’ve worked so hard to build actually stick:

- Culture and maturity: some companies have a fail-fast culture, but others aren’t there yet. Define enforcement that fits the culture.
- Timing of violation notifications: it’s best to warn early and fail late.
- Promotion of component selection: developers should be empowered to find what other versions are available, what versions are popular, and the risks involved.
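One way to picture "warn early, fail late" is a single threshold on the delivery pipeline: violations found before it only produce warnings, and violations found at or after it block progress. The Python sketch below assumes a made-up set of stage names and a hypothetical `enforce` helper; it is meant to show the shape of the idea, not a real tool's configuration.

```python
from enum import IntEnum


class Stage(IntEnum):
    # Ordered from earliest to latest; the stage names are assumptions.
    IDE = 1
    BUILD = 2
    RELEASE = 3
    PRODUCTION = 4


def enforce(violation_stage: Stage, fail_from: Stage = Stage.RELEASE) -> str:
    """Return "warn" before the fail_from stage and "fail" from it onward."""
    return "fail" if violation_stage >= fail_from else "warn"


# Default: developers are warned in the IDE and at build time,
# and the pipeline only fails once a release is attempted.
for stage in Stage:
    print(stage.name, enforce(stage))

# A team with a fail-fast culture could move the threshold earlier.
print(enforce(Stage.BUILD, fail_from=Stage.BUILD))
```

Making `fail_from` a parameter reflects the culture and maturity point: the same policy can be enforced more or less strictly depending on what a given team is ready for.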

At the end of the conversation, Carlucci stresses that building good component practices involves a cycle of discovery, inventory, policy, mitigation, and enforcement. However, it’s important to keep in mind some common challenges many organizations are faced with:

- Failure to embrace change
- Organizational change
- Weak executive support

The most successful organizations overcome these challenges.

For more details on building out better component practices, you can watch the presentation here. You can view all sessions from the 2018 Nexus Users’ Conference, held in June, here.

About the author Sylvia Fronczak:

Sylvia Fronczak is a software developer who has worked in various industries with various software methodologies. She’s currently focused on design practices that the whole team can own, understand, and evolve over time.