Editor’s note: This is Part Three of a four-part series, talking with Bryan Batty, Director of Product and Infrastructure Security at Bloomberg Industry Group. In Part Two, Bryan shared his thoughts on pipelines and SBOMs. In this section, Bryan discusses ongoing experiments and how to measure successful initiatives.

"If we are able to get those numbers down, then eventually there will be less time we actually spend remediating security and more time building security into the application." -- Bryan Batty

Learning From Surprises

Mark Miller:

What did you learn recently that surprised you?

Bryan Batty:

Gosh. I had my first kid in 2019. So there are a lot of things where I said, "Whoa, I didn't know they could do that!" But I assume this is scoped to technology. The Capital One hack surprised me. They are a leader in cloud adoption and they're very good at security. Seeing that was a big shocker.

Mark Miller:

Did your team go back and look, based on that, and ask, "Is this going to affect us?"

Bryan Batty:

Oh, I think everybody who's worth their salt has done that across all technology organizations. We're looking at different encryption methods and we're not very mature in the cloud just yet. We're getting there. We've made great strides in the past year and a half.

The Capital One breach came early enough in our adoption that we could say, "Hey, we don't have to go back and look at hundreds, or thousands and thousands, of S3 buckets and see what's going on." We're building this now, so this is a good opportunity for us to look at our current practices.

Experimenting With Physical Proximity

Mark Miller:

What is your team working on this year? What do you hope to accomplish?

Bryan Batty:

I alluded earlier to our experiment of embedding security professionals with the developers. That's something we're making headway on now.

Mark Miller:

In the same room? At the same table?

Bryan Batty:

Yes, in the same sprint meetings. Not necessarily sitting there 24/7, but attending the same sprint planning sessions, backlog grooming sessions, and sprint reviews. We want them working alongside developers and maybe even doing some code check-ins. I don't know if all security professionals are comfortable with that.

I don't know if all development leads are comfortable with that either, but in some distant future, I envision being able to move people around into different roles. At a previous company, we experimented with that. I was on a “DevOps team,” and I know that's an anti-pattern, but that's what it was. Having a separate team called DevOps is the anti-pattern. We were really Ops, doing infrastructure-as-code.

The developers were pushing features into production. Developers rotated onto our team and some of us rotated onto a development team, so we had some rotation there. It was a good experiment, but it doesn't really work in practice, because people choose what they want to do for a reason. They want to focus on what they're doing.

A Ruby developer wants to be a Ruby developer. They don't necessarily want to look at call information. The same goes for security professionals; an AppSec person may have come to the field through pen testing. I got into it because I was a developer. I was more of a defender than a breaker: a builder who turned into a defender. I think I could move into that role more easily than other people could. I've seen a lot of resistance, though.

Measuring What's Working

Mark Miller:

When we’re looking at giving security a seat at the table in the development realm, I'm always trying to find the measurements. At the end of the day, you're going to have to prove to the business that this is better than what we were doing before. What measurements do you use to say, “This is working”? Is having security on my team working?

Bryan Batty:

We're just starting to track average time to remediation of security vulnerabilities. We started tracking it around the end of the first quarter of 2019, and I'm just starting to see the results.

That's a number we can really move. We can see how long a security ticket has been assigned to a development team. I can point to that and say, “Hey, you guys worked on this for a long time.” If we are able to get those numbers down, then eventually there will be less time we actually spend remediating security and more time building security into the application.
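Average time to remediation, as described here, is the mean gap between when a security ticket is opened (or assigned to a development team) and when it is resolved. The sketch below is a minimal illustration under stated assumptions: each ticket is a hypothetical (opened, resolved) timestamp pair with made-up dates, and tickets that are still open are left out of the average. It is not the tooling Bryan's team uses.

```python
from datetime import datetime
from statistics import mean

# Hypothetical security tickets as (opened, resolved) pairs.
# Dates are invented for illustration; resolved=None means still open.
tickets = [
    (datetime(2019, 4, 1), datetime(2019, 4, 11)),
    (datetime(2019, 4, 3), datetime(2019, 4, 5)),
    (datetime(2019, 5, 10), datetime(2019, 6, 9)),
    (datetime(2019, 6, 1), None),
]


def mean_time_to_remediation_days(records):
    """Return the average number of days from opened to resolved.

    Still-open tickets (resolved is None) are skipped, so they do not
    drag the average in either direction.
    """
    durations = [
        (resolved - opened).total_seconds() / 86400  # seconds per day
        for opened, resolved in records
        if resolved is not None
    ]
    if not durations:
        raise ValueError("no resolved tickets to average")
    return mean(durations)


print(mean_time_to_remediation_days(tickets))  # 10, 2 and 30 days -> 14.0
```

Tracking this value per quarter is one way to see whether the number is coming down. Excluding open tickets is a design choice; a team might instead report the age of open tickets separately so that long-running items stay visible.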

Mark Miller:

How do you measure rework? If you've done it right, you're going to do away with the whole category called rework. Say you knowingly introduce a vulnerability, whether in your own code or not, and it's reviewed later and someone comes back and says, "Hey, this thing was bad from the beginning." That's part of rework. It does play into mean time to remediation, but the developers are focused on, "Well, I have the next features or functions I'm trying to build into this application.”

How much of a priority is this rework or remediation effort from a security perspective?

Bryan Batty:

There is some overlap. A vulnerability could be rework, or it could just be a newly discovered vulnerability. That's not necessarily rework. It's just something they would have had to do, because nobody knew it was a vulnerability in the first place.

On one hand, if developers always built quality code, there would be no need for an application security team. However, that's like NoOps. It's NoSec. Security knowledge and skills are distributed throughout the organization, and the security team doesn't necessarily need to do a whole lot, if there is a security team at all.

That's an anti-pattern. It's something that sometimes develops naturally. Some developers are very good, and I certainly wouldn't want to take that away. I wouldn't want to throw a wet blanket over a developer who's hot on security but still wants to stay in their role. If you want to be my champion, please help me help you. People like that make my job a lot easier. They're already building great applications. My job is to monitor and continue to help educate.

In my organization, we have development teams of varying maturity levels as far as security goes. For one team, I look at our tools and think, "Hey, it's looking great.” I check on them once a week. I get alerts when a critical component comes in, but that almost never happens. Other teams I need to spend a lot more time with, offering a bit more security education and security awareness.

Recognizing Competing Goals

Bryan Batty:

As far as having an AppSec team disappear completely, that would be a very, very bad thing, because at the end of the day, who’s feeding the person? A developer? Their goals are features. Their goals are to please their boss, get a scratch behind the ear, and put features into production.

Their boss's goals, or the business lead’s goals, are revenue. They're trying to get market share. They're trying to differentiate themselves from their competitors and increase the company's reputation. There's nothing in there about security. Now, when it comes to reputation... we're starting to get a little closer, because if you have a security breach, there goes your reputation.

The business is concerned about avoiding a Capital One situation, where they're front-page news and people refer to them the way they did Equifax. So have at least one person whose sole job is to advocate for security and monitor progress on it. Yes, it's great if development teams can work really hard at security and basically run themselves, but at the end of the day, that's not their goal.

Mark Miller:

That's not what they're compensated for.

Bryan Batty:

Right, exactly. Compensation is the number one thing. They want to get that year-end bonus. We can work things in like, "Hey, how many security vulnerabilities did you remediate this year? What kind?" It's not just how many. Quantifying the value of security efforts takes a lot more than counting how many tickets you resolved, but that could be a start. There could be a program where developers get kudos for something like that. Not just kudos, but kudos that are directly tied to their compensation.

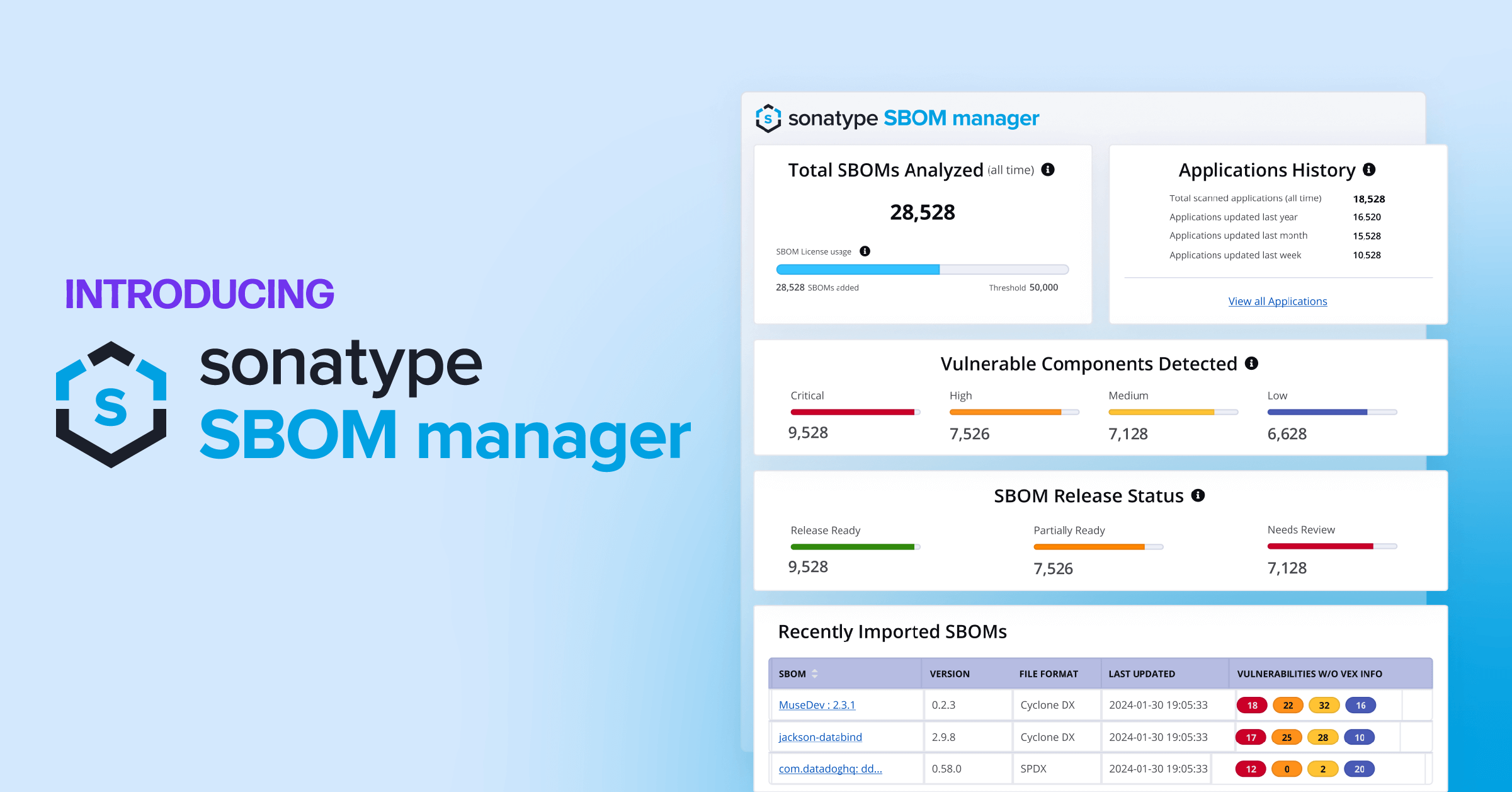

This is Part Three of our discussion with Bryan Batty. Catch up by reading Part One, where he describes using Software Composition Analysis (SCA) tools. In Part Two, Bryan discusses his ideas around making security an essential part of the software supply chain. Finally, in Part Four, he explains why he selected the Sonatype Platform.